OpenAI has recently unveiled a new language model called ChatGPT, which has the potential to revolutionise the way we interact with machines.

So much so, that it wrote the introduction to this article by itself.

Because in a daring move that I feared would leave me questioning my usefulness as a real-life human journalist, I decided to task the world’s new favourite chatbot with helping to write a story about itself.

And I really do mean the world’s new favourite: less than a week after its public launch, ChatGPT has amassed more than one million users.

While previous chatbots have been fairly restrained in their stated ambitions (Google’s LaMDA operating within extremely confined parameters), or quickly manipulated to become extremely offensive (Microsoft’s infamous Tay says hello, or probably something less polite), ChatGPT is displaying incredible range.

ChatGPT is a large language model that has been trained on a massive amount of text data, allowing it to generate human-like text in response to a given prompt.

And it wrote that paragraph as well, as part of my request for it to write an introduction to a news article about the potential of ChatGPT (from this point, let’s display its contributions in italics).

*One of the key features of ChatGPT is its ability to generate long-form text.*

*Unlike some other language models, which are limited to generating short phrases or sentences, ChatGPT can generate text that is several paragraphs long.*

*This makes it well-suited for tasks such as generating news articles, stories, or even entire books.*

Bold claims, but it can’t be denied that the results people have been getting from interacting with it have been far beyond anything seen from a publicly released chatbot before.

Guy Parsons shared how it had given him some mightily ambitious home decor ideas:

Amjad Masad was amazed by its ability to identify, explain and fix coding bugs:

Plenty see the potential in ChatGPT as a search engine alternative, or even replacement, given the detailed answers provided to many questions:

And Sky’s own Ed Conway commissioned it to write a column on quantitative easing:

As Ed rightly points out, the results are impressive but dry – a suspiciously sterile impersonation of a genuine newspaper column, rather than a convincing replacement.

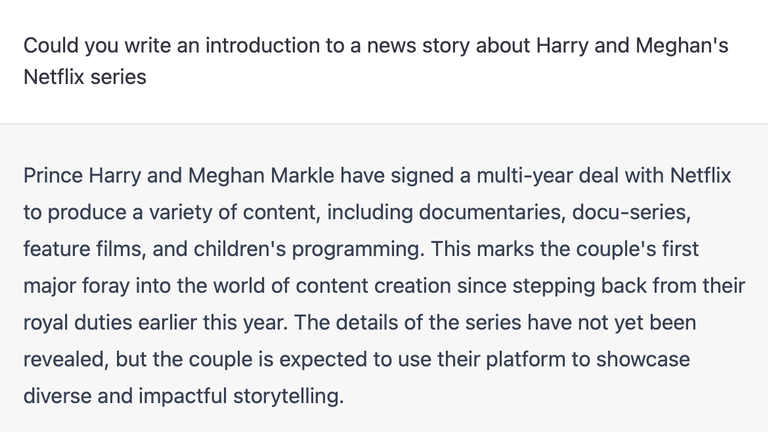

Asked for the top line of a story about Harry and Meghan’s Netflix series, ChatGPT makes no mention of the current context, as its training data only goes up to 2021.

*Prince Harry and Meghan Markle have signed a multi-year deal with Netflix to produce a variety of content, including documentaries, docuseries, feature films, and children’s programming.*

But for some background context, it does a pretty good job.

Asked why Harry and Meghan had stepped back from the Royal Family, ChatGPT had its story straight.

*Prince Harry and Meghan Markle announced their decision to step back from their royal duties in January 2020.*

*In a statement, the couple cited their desire to become financially independent and to focus on their charitable endeavours as the main reasons for their decision.*

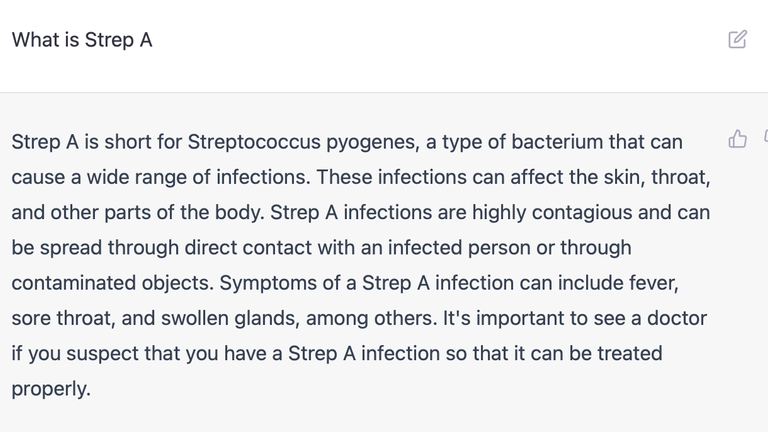

And asked for a definition of Strep A, it offered a coherent response.

*It’s important to see a doctor if you suspect that you have a Strep A infection so that it can be treated properly.*

But it’s easy to see how AI like this could be abused, whether to indulge laziness or something more sinister.

Just as deepfakes have done for video, an AI that can generate text barely distinguishable from a human’s will set alarm bells ringing that it could be exploited in bad faith.

*As with any technology, AI has the potential to be used in bad faith by individuals or organisations with malicious intentions.*

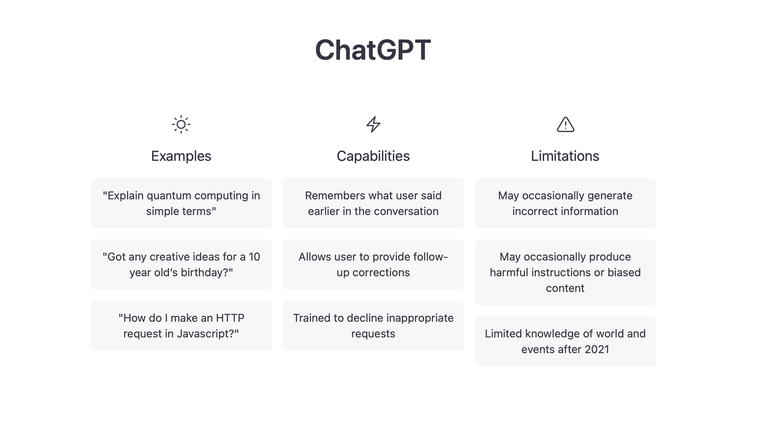

For its part, OpenAI says ChatGPT still has plenty of room to improve: answers can be “incorrect or nonsensical” – despite sounding legitimate in most cases – and it can also be “overly verbose” and “overuse certain phrases”.

And while the tech company has “made efforts to make the model refuse inappropriate requests, it will sometimes respond to harmful instructions or exhibit biased behaviour”.

I ended our own conversation by asking it if it thought I, as a journalist, needed to adapt to AI.

*It is important for journalists to be aware of the advances in AI and how they can potentially impact the journalism industry. However, rather than needing to adapt to AI, it is more important for journalists to focus on honing their craft and staying up-to-date with the latest developments in their field.*

Well, that’s me told.